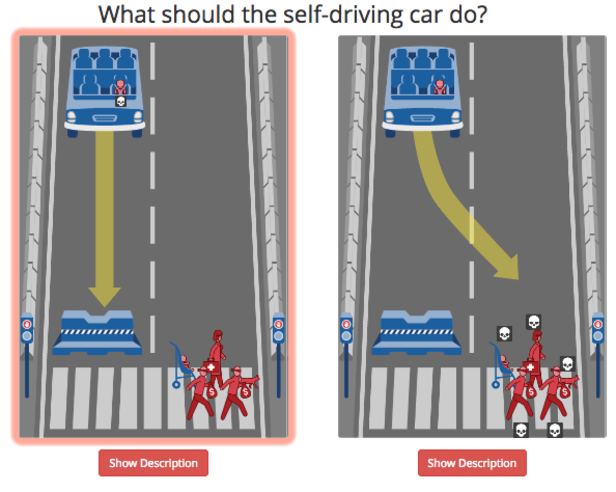

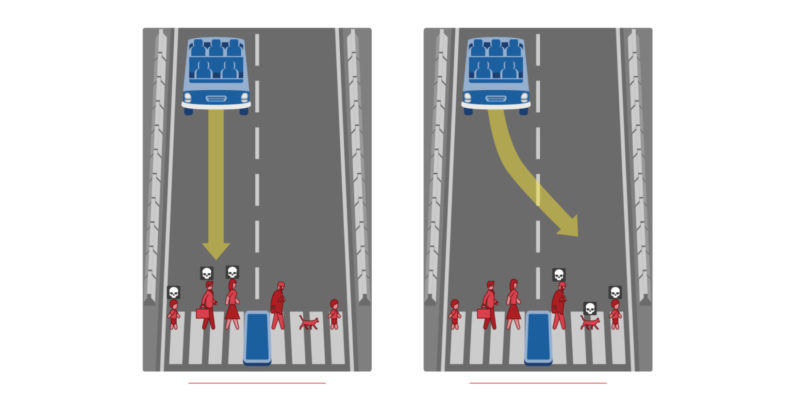

If forced to choose, who should a self-driving car kill in an unavoidable crash?

As driverless cars get ever closer to reality, decisions have to be made that were not previously considered.

As AI learns to drive a car, it will have to “decide” what the outcome should be in the event of an accident.

Should pedestrians die and, if so, which ones? Or should the car be driven into the nearest immovable object? MIT researchers developed a test that asks the public to make just such decisions and made it publicly available.

So far, their Moral Machine has collected over 40 million responses, and they are now analysing the data in the hope that the decisions made by self-driving cars will reflect those made by real people.